Welcome to the future, where technology arrives first and accountability has to catch up later.

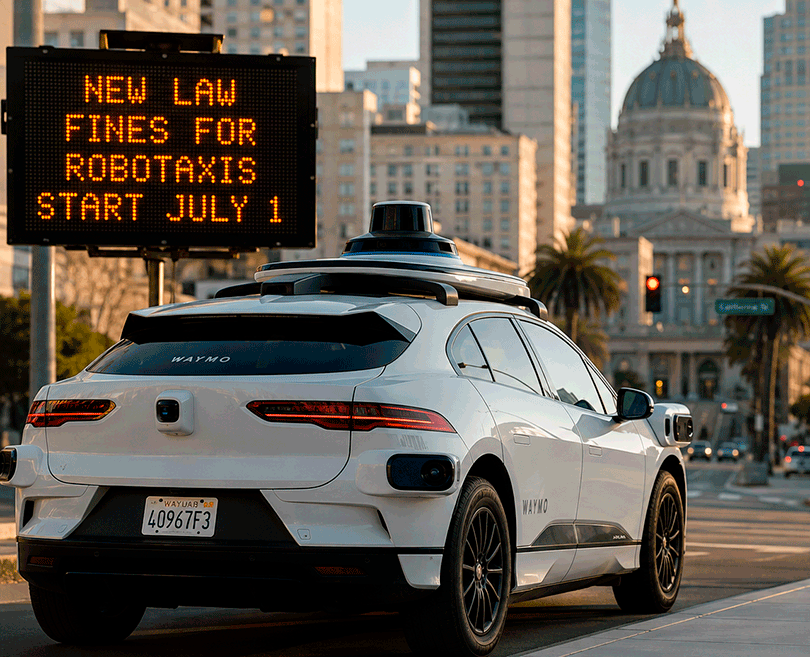

California, the state that spent decades selling the world a vision of innovation, freedom, and the future, just hit the brakes. Starting July 1, autonomous vehicles, including robotaxis like Waymo, will face the toughest regulations in the United States. Police will now be able to document traffic violations, manufacturers will receive official notices, and the DMV will investigate what happened.

And then it gets serious.

- Fleet size restrictions.

- Route limitations.

- Speed controls.

- Even full suspension of operating permits.

This is no small policy adjustment.

This feels more like a warning shot to the entire tech industry.

And this is where it gets truly fascinating.

For years, we were taught to fear human drivers.

The drunk driver.

The distracted driver.

The exhausted driver checking a phone at 70 miles per hour.

Then came the promise of AI.

Machines would be colder. More precise. Smarter. Safer.

But here’s the paradox.

When a human makes a mistake, one person is responsible.

When a machine makes a mistake, the system is responsible.

And when that system makes mistakes at scale, the question becomes much bigger.

Who allowed it onto public roads in the first place?

That changes everything.

For the first time, the government is not just watching innovation unfold.

It is saying something far more powerful.

If your algorithm creates danger, your business can be stopped.

This is no longer just a transportation story.

This is a power story.

For years, major tech companies followed the same pattern.

Launch first.

Scale fast.

Let society figure out the consequences later.

Social media exploded before privacy laws caught up.

Short-term rentals transformed cities before housing systems adapted.

Electric scooters flooded sidewalks before regulation existed.

And now robotaxis are following the same script.

Technology often moves faster than law because innovation is rewarded for speed, not caution.

But autonomous vehicles may be where that formula finally starts to break.

Because this is not just another app.

This is thousands of pounds of moving metal making split-second decisions in real traffic.

- Rain.

- Children crossing.

- Cyclists.

- Construction zones.

- Emergency vehicles.

- Unexpected human behavior.

This is not code living on a screen.

This is code making physical choices in public spaces.

For years, people asked, “Can self-driving cars work?”

But maybe the more important question was always this.

Who pays the price when they don’t?

And here’s the uncomfortable truth beneath all of it.

We live in a time when technological capability is accelerating faster than our moral readiness to manage it.

Progress used to feel like improvement.

Now it often feels like a live experiment run on society.

We are promised convenience.

Lower labor costs.

Faster rides.

Less human error.

But convenience often hides a dangerous reality.

Human mistakes are individual.

Systemic mistakes can multiply instantly.

One reckless driver is dangerous.

A flawed autonomous fleet can become a citywide issue.

That is why California’s decision matters far beyond traffic tickets.

This is one of the first major signals that society is beginning to say something Silicon Valley has rarely heard clearly enough:

You do not get to beta test the future on the public without consequences.

And symbolically, that matters.

The same state that helped build the world’s biggest technology dreams is now becoming one of the first to aggressively define their limits.

That feels less like resistance.

And more like maturity.

Because every major innovation tends to move through the same three stages.

Excitement.

Mass adoption.

Regulation.

Cars once symbolized freedom. Then came licenses, seatbelts, insurance, and speed limits.

The internet symbolized openness. Then came privacy battles, moderation, and digital law.

AI today feels revolutionary. But history suggests something important.

Every powerful tool eventually faces one unavoidable question.

Where are the boundaries?

And this is bigger than autonomous vehicles.

Robotaxis are just the mirror.

Because society keeps repeating the same mistake.

We often ask whether we can build something long before we ask what happens when it fails.

New algorithms.

New platforms.

New AI systems.

New automation.

We love the speed of innovation.

We rarely love discussing the cost of failure.

But the cost always arrives eventually.

Sometimes as lost privacy.

Sometimes as addiction.

Sometimes as displaced jobs.

Sometimes as physical risk.

That is why this story resonates so deeply.

This is not just about traffic laws.

This is about the end of blind technological trust.

We are entering the age of technological accountability.

The question is shifting.

Not “Is it innovative?”

But “Is it safe?”

Not “Can we launch it?”

But “Are humans truly ready for its consequences?”

And maybe that is the most important shift of all.

Science fiction taught us to fear machines taking control.

Reality is proving something more subtle.

The real danger may not be that machines become too powerful.

It may be that humans hand over power too quickly without building systems strong enough to control it.

One of the biggest illusions of modern life is believing that “technological” automatically means “better.”

It doesn’t.

“Technological” means “new.”

And new still has to prove it is safer, wiser, and more trustworthy.

California may simply be the first place saying out loud what much of the world will eventually have to confront.

Progress should not be feared.

But progress should never be treated as infallible.

Because a future without accountability may not be innovation at all.

It may just be a very expensive mistake.

And here is the real insight that makes this story personal for everyone.

We are no longer deciding whether technology will shape our lives.

That decision has already been made.

What we are deciding now is something even bigger.

Will we remain the architects of technology?

Or will we become its testing ground?

Because if society does not ask hard questions early enough, consequences will answer them later.

The future does not begin when a driverless car appears on your street.

The future begins when society decides the rules under which that car is allowed to stay there.

So maybe the most important button in tomorrow’s car is not “Start.”

Maybe it’s “Stop.”

Because real progress does not begin with speed.

It begins with responsibility.